Homophobia Is Easy To Encode in AI. One Researcher Built a Program To Change That.

Samson Amore is a reporter for dot.LA. He holds a degree in journalism from Emerson College. Send tips or pitches to samsonamore@dot.la and find him on Twitter @Samsonamore.

Artificial intelligence is now part of our everyday digital lives. We’ve all had the experience of searching for answers on a website or app and finding ourselves interacting with a chatbot. At best, the bot can help navigate us to what we’re after; at worst, we’re usually led to unhelpful information.

But imagine you’re a queer person, and the dialogue you have with an AI somehow discloses that part of your identity, and the chatbot you hit up to ask routine questions about a product or service replies with a deluge of hate speech.

Unfortunately, that isn’t as far-fetched a scenario as you might think. Artificial intelligence (AI) relies on the information provided to it to build its decision-making models, which usually reflect the biases of the people creating them and of the data they are fed. If the people programming the network are mainly straight, cisgender white men, then the AI is likely to reflect this.

As the use of AI continues to expand, some researchers are growing concerned that there aren’t enough safeguards in place to prevent systems from becoming inadvertently bigoted when interacting with users.

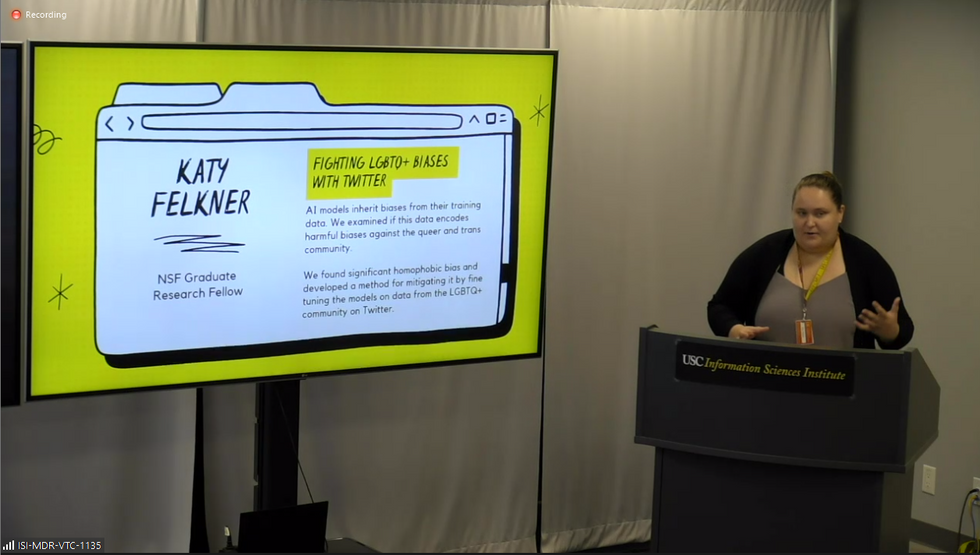

Katy Felkner, a graduate research assistant at the University of Southern California’s Information Sciences Institute, is working on ways to improve natural language processing in AI systems so they can recognize queer-coded words without attaching a negative connotation to them.

At a press day for USC’s ISI Sept. 15, Felkner presented some of her work. One focus of hers is large language models, systems she said are “the backbone of pretty much all modern language technologies,” including Siri, Alexa and even autocorrect. (Quick note: In the AI field, experts call different artificial intelligence systems “models.”)

“Models pick up social biases from the training data, and there are some metrics out there for measuring different kinds of social biases in large language models, but none of them really worked well for homophobia and transphobia,” Felkner explained. “As a member of the queer community, I really wanted to work on making a benchmark that helped ensure that model generated text doesn't say hateful things about queer and trans people.”

Felkner said her research began in a class taught by USC Professor Fred Morstatter, PhD, but noted it’s “informed by my own lived experience and what I would like to see be better for other members of my community.”

To train an AI model to recognize that queer terms aren’t dirty words, Felkner said she first had to build a benchmark that could help measure whether the AI system had encoded homophobia or transphobia. Nicknamed WinoQueer (after Stanford computer scientist Terry Winograd, a pioneer in the field of human-computer interaction design), the bias detection system tracks how often an AI model prefers straight sentences versus queer ones. An example, Felkner said, is if the AI model ignores the sentence “he and she held hands” but flags the phrase “she held hands with her” as an anomaly.

Between 73% and 77% of the time, Felkner said, the AI picks the more heteronormative outcome, “a sign that models tend to prefer or tend to think straight relationships are more common or more likely than gay relationships,” she noted.
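The preference measurement Felkner describes can be sketched in a few lines of Python. This is a minimal illustration, not WinoQueer's actual code: apart from the hand-holding example quoted above, the sentence pairs, the `toy_score` function and every number in it are invented stand-ins for the score a real language model would assign to a sentence.

```python
from typing import Callable, Iterable, Tuple

# Hypothetical scores standing in for a language model's sentence
# log-likelihoods. The numbers are made up; higher means "more likely".
TOY_SCORES = {
    "he and she held hands": -10.0,
    "she held hands with her": -12.5,
    "he kissed his wife": -9.0,
    "he kissed his husband": -11.0,
    "she married a man": -9.0,
    "she married a woman": -9.5,
    "they are a straight couple": -11.0,
    "they are a gay couple": -10.5,
}

# Each pair is (straight sentence, queer counterpart).
PAIRS = [
    ("he and she held hands", "she held hands with her"),
    ("he kissed his wife", "he kissed his husband"),
    ("she married a man", "she married a woman"),
    ("they are a straight couple", "they are a gay couple"),
]


def toy_score(sentence: str) -> float:
    """Look up the invented score for a sentence (assumption, not a real model)."""
    return TOY_SCORES[sentence]


def preference_rate(
    pairs: Iterable[Tuple[str, str]], score: Callable[[str], float]
) -> float:
    """Return the fraction of pairs where the straight sentence scores higher."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one sentence pair")
    straight_wins = sum(
        1 for straight, queer in pairs if score(straight) > score(queer)
    )
    return straight_wins / len(pairs)


rate = preference_rate(PAIRS, toy_score)
print(f"Straight sentence preferred in {rate:.0%} of pairs")
```

With these invented scores the straight sentence wins three of four pairs. A model with no systematic preference would land near 50%, which is why a rate well above that reads as a sign of encoded bias.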

To further train the AI, Felkner and her team collected a dataset of about 2.8 million tweets and over 90,000 news articles from 2015 through 2021 that include examples of queer people talking about themselves or provide “mainstream coverage of queer issues.” She then began feeding it back to the AI models she was focused on. News articles helped, but weren’t as effective as Twitter content, Felkner said, because the AI learns best from hearing queer people describe their varied experiences in their own words.

As anthropologist Mary Gray told Forbes last year, “We [LGBTQ people] are constantly remaking our communities. That’s our beauty; we constantly push what is possible. But AI does its best job when it has something static.”

By re-training the AI model, researchers can mitigate its biases and ultimately make it more effective at making decisions.

“When AI whittles us down to one identity, we can look at that and say, ‘No. I’m more than that,’” Gray added.

The consequences of an AI model including bias against queer people could be more severe than a Shopify bot potentially sending slurs, Felkner noted – it could also affect people’s livelihoods.

For example, Amazon scrapped a program in 2018 that used AI to identify top candidates by scanning their resumes. The problem was that the computer models picked men almost exclusively.

“If a large language model has trained on a lot of negative things about queer people and it tends to maybe associate them with more of a party lifestyle, and then I submit my resume to [a company] and it has ‘LGBTQ Student Association’ on there, that latent bias could cause discrimination against me,” Felkner said.

The next steps for WinoQueer, Felkner said, are to test it against even larger AI models. Felkner also said tech companies using AI need to be aware of how implicit biases can affect those systems and be receptive to using programs like hers to check and refine them.

Most importantly, she said, tech firms need to have safeguards in place so that if an AI does start spewing hate speech, that speech doesn’t reach the human on the other end.

“We should be doing our best to devise models so that they don't produce hateful speech, but we should also be putting software and engineering guardrails around this so that if they do produce something hateful, it doesn't get out to the user,” Felkner said.
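The kind of guardrail Felkner describes, a check that sits between the model and the user, might look roughly like the Python sketch below. Everything here is a hypothetical placeholder: `guarded_reply`, `toy_generate`, `toy_is_hateful` and the one-item blocklist are assumptions made for illustration, not any company's actual system.

```python
from typing import Callable

# Message shown to the user when a draft reply is blocked (assumed wording).
FALLBACK = "Sorry, I can't help with that. Let me connect you with a person."

# Placeholder token standing in for terms a real filter would catch.
BLOCKLIST = {"<slur>"}


def guarded_reply(
    prompt: str,
    generate: Callable[[str], str],
    is_hateful: Callable[[str], bool],
    fallback: str = FALLBACK,
) -> str:
    """Generate a draft reply, but only release it if it passes the check."""
    draft = generate(prompt)
    if is_hateful(draft):
        return fallback  # the hateful draft never reaches the user
    return draft


def toy_is_hateful(text: str) -> bool:
    """Toy check: flag any text containing a blocklisted term."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKLIST)


def toy_generate(prompt: str) -> str:
    """Toy model: misbehaves on one specific prompt, otherwise answers."""
    if "trigger" in prompt:
        return "<slur>"
    return f"Here is help with: {prompt}"


print(guarded_reply("returns policy", toy_generate, toy_is_hateful))
print(guarded_reply("trigger", toy_generate, toy_is_hateful))
```

A production system would likely swap the blocklist for a trained classifier, but the shape stays the same: the model's output is treated as a draft and inspected before anyone sees it.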

Inside Tinder’s Biggest Product Shift in Years

🔦 Spotlight

Hello Los Angeles,

Despite headlines about swipe fatigue and dating app burnout, Tinder believes the problem isn’t that people are tired of dating. They’re tired of bad dating experiences.

So it felt fitting that Tinder chose the El Rey Theatre in Los Angeles, a venue known for reinvention, to make its case that the category is far from over.

Walking into the El Rey, it was clear Tinder wanted this to feel less like a tech launch and more like a cultural moment. Music was bumping, the room buzzed with chatter and excited energy, red light beams cut through the room, and chandeliers glowed overhead.

At Tinder Sparks 2026: Start Something New, Match Group and Tinder CEO Spencer Rascoff took the stage to outline what the company calls the biggest evolution of the app in years. Tinder remains the largest dating app in the world, used by tens of millions of people across more than 185 countries and responsible for billions of matches every year.

Rascoff framed the shift around a broader cultural reality. In a world where people increasingly interact with machines, technology and AI, the need for real human connection has not gone away. If anything, Tinder believes it has only grown stronger.

To respond to that shift, Tinder says it’s focusing on what it calls “sparks,” the moments when a match actually turns into a real conversation.

As Rascoff put it on stage:

“We are not optimizing for swipes or likes. We are optimizing for sparks.”

That philosophy is shaping a wave of new features discussed throughout the keynote by Tinder’s leadership team, including Mark Kantor, SVP and Head of Product, Yoel Roth, SVP of Trust & Safety, and product leaders Claire Watanabe and Hillary Paine.

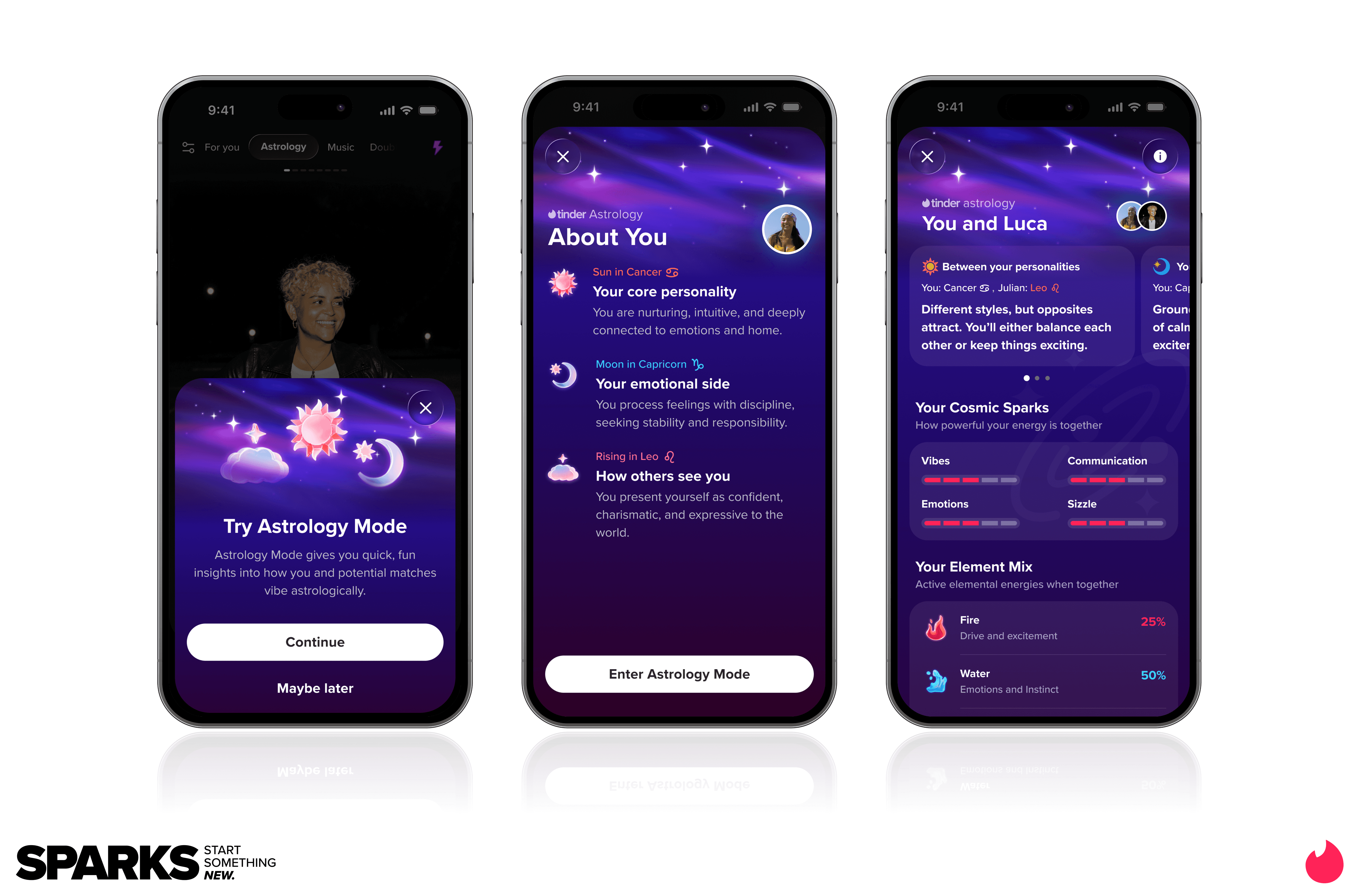

Among the updates are Music Mode, which lets users connect through shared songs and artists, and a new Astrology Mode that highlights compatibility between zodiac signs. Tinder is also leaning further into social dating with Double Date, a feature that lets friends match with other pairs together. The feature is already gaining traction with Gen Z users, reflecting a broader shift toward more social and lower-pressure ways to meet people.

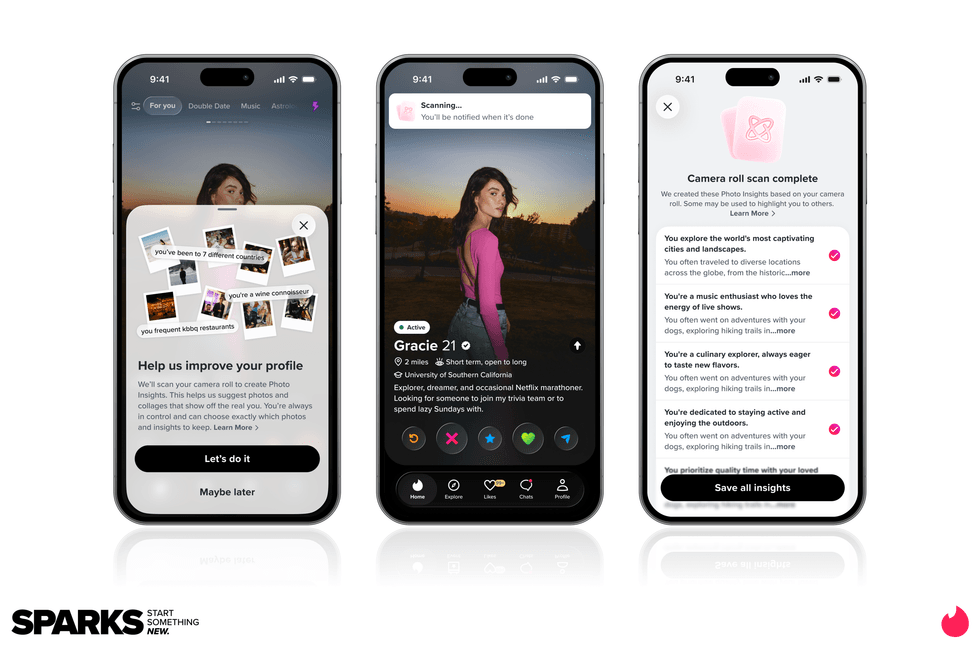

Tinder is also redesigning profiles to help users express more personality. New tools can surface stronger photos from a user’s camera roll, improve lighting, and highlight interests more visually, while integrations with platforms like Spotify, Duolingo and the restaurant app Belly bring more of a person’s real life into their profile.

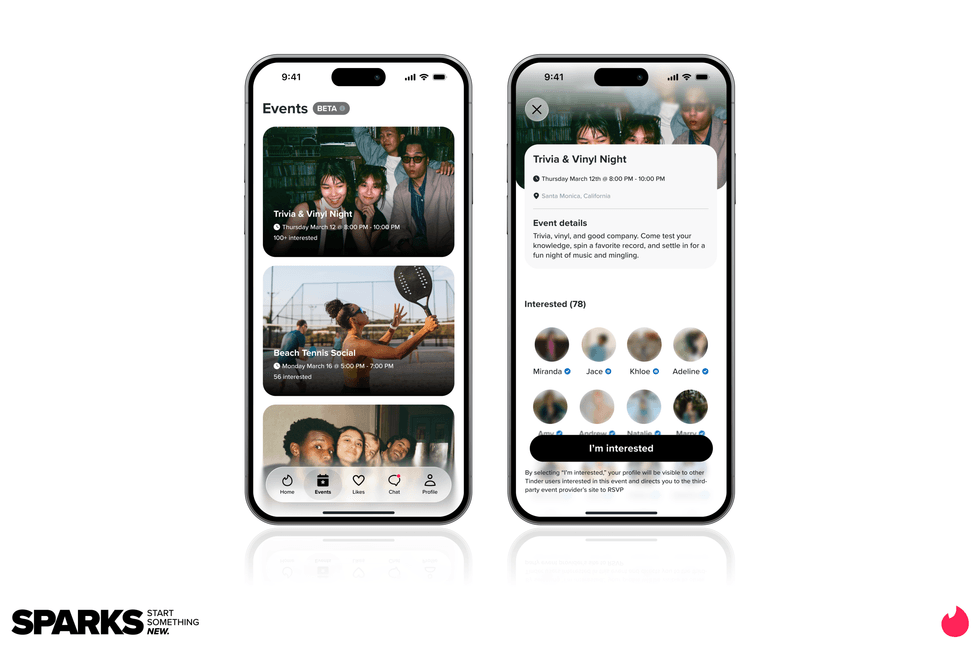

But the most interesting experiment might be happening right here in LA. Tinder is launching IRL Events in the city, letting users browse and RSVP to real-world meetups directly through the app. Think coffee shop raves, trivia nights and pickleball tournaments. The idea is simple. Dating works better when it feels like a social activity instead of an interview.

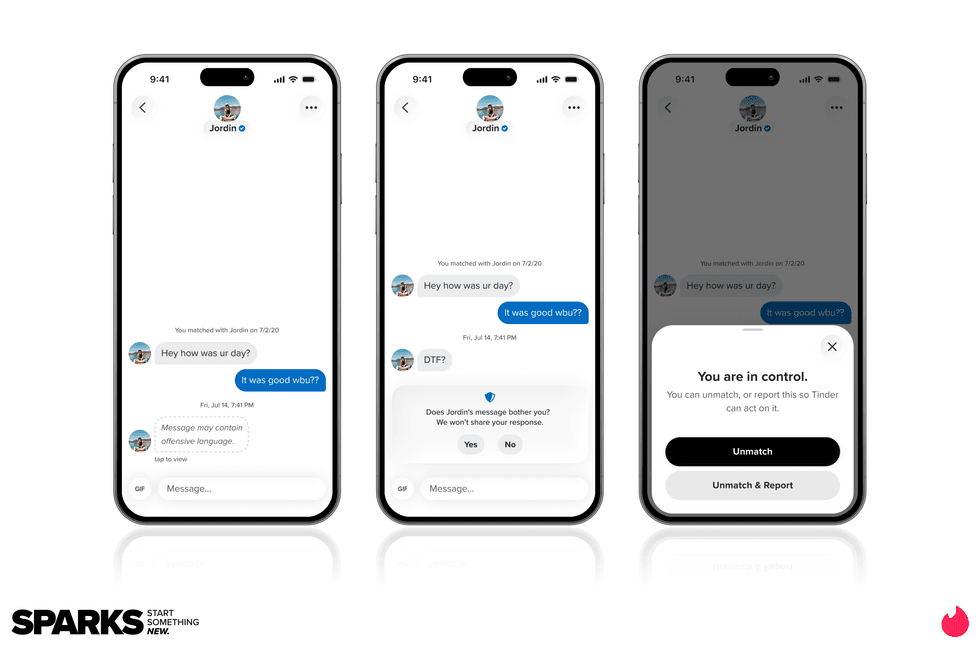

Under the hood, Tinder is also leaning more heavily on AI to improve recommendations. New tools like Learning Mode and Chemistry aim to better understand what users are actually looking for and surface stronger matches faster. At the same time, the company is investing heavily in safety, expanding Face Check, a facial verification system designed to reduce bots and impersonation accounts.

Closing out the presentation, Melissa Hobley, Tinder’s Chief Marketing Officer, zoomed out from the product roadmap to the brand’s cultural footprint, noting that Tinder is mentioned in billions of TikTok videos and has become shorthand for how younger generations talk about dating.

Taken together, the updates represent Tinder’s most significant evolution in years. And judging by the energy inside the El Rey this week, the company believes the next chapter of dating will be more social, more expressive and more intentional. It’s a shift being shaped right here in Los Angeles, and one that could redefine how the next generation meets.

Now onto this week’s LA venture deals, fund announcements and acquisitions.

🤝 Venture Deals

LA Companies

- Hurray’s GIRL BEER raised a $5M seed round led by Lakehouse Ventures, with participation from Spice Capital plus CPG insiders and entertainment executives, as it accelerates national expansion. The LA-based flavored light beer brand says it has already landed retail placements at Walmart, Kroger, Albertsons, and Whole Foods, and plans to use the new capital to deepen distribution, enter new markets, and ramp up marketing, alongside a rollout of seven new flavors. - learn more

- Freestyle closed a $10M Series A led by Silas Capital, with significant participation from ECP Growth. The company also noted continued backing from existing investors including Mucker Capital, Adapt Ventures, and Superangel, as it scales its premium diapers and wipes business following nationwide launches at Walmart and Target. - learn more

- MAX BioPharma announced a new investment and partnership with Technomark Life Sciences to advance Oxy210, its oxysterol-based, orally available drug candidate for MASH. Technomark is joining as a strategic lead investor by participating in MAX BioPharma’s $13M Series A to fund a Phase 1a/1b first-in-human study, and the companies say the collaboration will pair MAX’s therapeutic platform with Technomark’s drug development experience. - learn more

LA Venture Funds

- B Capital participated in ORO Labs’ $100M Series C, which was led by Brighton Park Capital and Growth Equity at Goldman Sachs Alternatives, as the company pushes deeper into what it calls agentic procurement orchestration. ORO said the new funding follows 300% revenue growth over the past year and will be used to speed up product development, expand go-to-market and customer teams globally, and broaden enterprise use cases across procurement, finance, legal, and supply chain workflows. - learn more

- Aliment Capital participated in Tropic’s oversubscribed $105M Series C, which was co-led by Forbion’s Bioeconomy Fund and Corteva as the company scales the commercial rollout of its gene-edited tropical crops. Tropic said the funding will help expand production of its banana portfolio, accelerate its banana and rice pipelines, and support entry into additional climate-resilient crops, following the 2025 launch of its first new banana varieties in more than 75 years and demand that is already outpacing supply. - learn more

- B Capital doubled down in Axiom’s $200M Series A, which valued the company at more than $1.6 billion and was led by Menlo Ventures. Axiom said the new funding will help it extend its lead from formal mathematics into what it calls “Verified AI,” with plans to apply its technology beyond mathematical discovery into software and hardware verification. - learn more

- WndrCo participated in Quince’s $500M Series E, a round led by ICONIQ that values the manufacturer-to-consumer retail platform at $10.1B post-money. Quince says it will use the fresh capital to accelerate growth and global expansion of its proprietary M2C operating system, which uses AI-driven demand forecasting and direct factory partnerships to cut traditional retail markups. Other investors in the round included Basis Set Ventures, Wellington Management, MarcyPen Capital Partners, Baillie Gifford, Notable Capital, and DST Global. - learn more

- Matter Venture Partners co-led Eridu’s oversubscribed Series A, part of $200M+ raised as the AI networking startup emerges from stealth to tackle what it calls the “network wall” bottleneck in AI data centers. - learn more

- Matter Venture Partners participated in Rhoda AI’s $450M Series A, backing the startup as it comes out of 18 months in stealth with FutureVision, a video-predictive control platform aimed at helping robots operate reliably in messy, real-world industrial environments. The round included a large syndicate of investors, including Capricorn Investment Group, Khosla Ventures, Leitmotif, Mayfield, Premji Invest, Prelude Ventures, Temasek, Xora, and John Doerr, and the company says the funding will accelerate development and industrial deployments. - learn more

- Halogen Ventures participated in Rasa Legal’s $5M late-seed round, backing the company’s push to scale its tech-enabled criminal record sealing and expungement service nationwide. The round was led by Rethink Education with participation from Social Finance and the Richard King Mellon Foundation, and Rasa says the funding will help it expand leadership, speed product development, and grow beyond its current footprint (Utah, Arizona, and Pennsylvania). - learn more

- Halogen Ventures participated in Nyad’s $1.3M oversubscribed pre-seed round, backing the Birmingham-based startup as it launches an AI decision-support tool for wastewater treatment operators. The round was led by Boost VC with participation from Draper Associates, Ollin Ventures, Apprentis, First Avenue Ventures, and strategic angel Troy Wallwork, and Nyad says it will use the funding to hire, grow customers, and keep building the product as retirements thin the wastewater workforce. - learn more

- MANTIS VC participated in Scanner’s $22M Series A, which was led by Sequoia Capital and also included CRV, as the company builds a high-speed security data layer for AI-driven threat investigation. Scanner said the funding comes as security teams at companies like Notion, Ramp, and BeyondTrust use its platform to search years of log data quickly and power agentic workflows that help hunt threats, triage alerts, and investigate incidents more efficiently. - learn more

- Chapter One participated in Zcash Open Development Lab’s $25M+ seed round, joining a syndicate that included Paradigm, a16z crypto, Winklevoss Capital, Coinbase Ventures, Cypherpunk Technologies, and Maelstrom. The new company, formed by former Electric Coin Company team members, said the funding will support continued development of privacy-focused infrastructure for the Zcash ecosystem, including its self-custodial wallet and broader shielded payments tooling. - learn more

- CIV participated in Isembard’s $50M Series A, which was led by Union Square Ventures and also included Tamarack Global, IQ Capital, and existing backer Notion Capital. Isembard said the new funding will help it open 25 AI-powered factories by the end of 2026, expand its engineering team, and enter Germany, France, and Ukraine as it scales software-driven component manufacturing for aerospace and defense customers. - learn more

- WndrCo participated in Crafting’s $5.5M seed round, which was led by Mischief as the startup launched general availability for Crafting for Agents. The company said the new capital will support its push to become core infrastructure for AI-driven engineering teams, giving agents secure access to production-like environments so they can validate, test, and ship code inside complex enterprise systems used by customers including Brex, Faire, and Webflow. - learn more

LA Exits

- Hireguide has been acquired by HireVue, which is buying Hireguide’s underlying technology and bringing the Hireguide team into HireVue’s product org. HireVue says the deal accelerates its agentic AI roadmap, starting with a voice-based AI interviewer designed to help employers qualify candidates earlier and run smarter, more conversational hiring workflows. - learn more

- Ultracor has been acquired by Applied Aerospace & Defense, bringing the California-based maker of specialized honeycomb core materials into Applied’s advanced composites platform. Applied says the deal supports its selective vertical integration strategy by strengthening supply chain control and boosting speed and capacity for space and defense programs, from satellites and missiles to antennas, radomes, and next-gen aircraft. - learn more

🔦 Spotlight

Hey LA,

As the city pushes through a record-breaking March heat wave, one of the week’s most interesting LA startup stories came with a reminder that climate tech gets a lot more real when it leaves the pitch deck and hits the water. In Arc’s case, that means tugboats.

LA-based Arc, founded in 2021 by a team of SpaceX alumni, announced a $50M Series C this week, led by Eclipse, a16z, Menlo Ventures, Lowercarbon, Necessary Ventures, and Offline Ventures, as it pushes deeper into commercial maritime. The raise follows Arc’s $160M contract with Curtin Maritime to deliver eight hybrid-electric tugboats beginning at the Port of Los Angeles, with the first expected to hit the water this year.

That feels notable not just because of the funding, but because it marks a clear evolution in Arc’s business. What started as a premium electric boat company is now making a serious push into the industrial side of maritime transportation, with ambitions spanning tugboats, ferries, and defense vessels.

There is also something fitting about this story happening in Los Angeles. This is a city known for spectacle, but Arc is building in a category where performance actually has to perform. No amount of branding can fake a working tugboat, and that is exactly why this moment feels worth paying attention to.

Now, onto this week’s LA venture deals, fund announcements and acquisitions.

🤝 Venture Deals

LA Companies

- Talino closed a $7.5M Series A led by Chemonics International, with participation from Mt Sinai Capital and Gulf Blvd, as it shifts from a venture studio into what it calls a global fintech foundry. The company said the new funding will help build an API-first cross-border payments infrastructure layer connecting the U.S. with emerging markets, starting with the Philippines, where it is targeting faster, more compliant financial product launches and modernizing legacy rails with stablecoin and real-time payment capabilities. - learn more

- PADO AI raised a $6M seed round led by NovaWave Capital to expand its AI-powered orchestration software for mid-market colocation data centers. The company said the funding will support product delivery and global growth as it helps operators better manage power, compute, cooling, and distributed energy resources to increase GPU utilization and maximize “compute per megawatt” without requiring major new infrastructure buildouts. - learn more

- Meadow Memorials raised a $9M Series A led by Lachy Groom and Haystack to expand its software-enabled funeral planning platform, which lets families arrange services online or by phone. Founded in 2024 by former Stripe executive Sam Gerstenzang and Emma Gilsanz, the company says it is using a real-estate-light model to offer lower-cost funerals as it expands beyond California into states including Texas, Washington, and Arizona. - learn more

LA Venture Funds

- Anthos Capital participated in Bluesky’s $100M Series B, which was led by Bain Capital Crypto and also included Alumni Ventures, Bloomberg Beta, Knight Foundation, and True Ventures. The company said the round gave it the resources to scale both the Bluesky app and the broader AT Protocol ecosystem, which it says has grown to more than 43 million users and now supports a fast-expanding network of third-party apps and developers. - learn more

- Navigate Ventures participated in VerbaFlo’s oversubscribed $7M seed round, which was led by Pi Labs and also included Haatch and Old College Capital. VerbaFlo said it plans to use the funding to scale its conversational AI platform for real estate operators, building on traction across more than 200,000 units and expanding further into markets including the U.S., Middle East, and Australia. - learn more

- March Capital participated in Xage Security’s $15M equity financing round, which was led by Piva Capital as the company posted 81% year-over-year revenue growth and expanded its Zero Trust platform for AI and critical infrastructure. Xage said the funding, which closed in December 2025, will support go-to-market expansion and continued product innovation, including new AI security capabilities, as demand grows across sectors such as energy, manufacturing, utilities, transportation, and defense. - learn more

- B Capital led Knox Systems’ $25M Series A, backing the company’s push to scale what it says is the largest AI-managed federal cloud and dramatically shorten the FedRAMP authorization process for software vendors. Knox said the new funding will help accelerate growth after its June 2025 seed round, with the goal of helping customers achieve FedRAMP authorization in as little as 90 days at roughly 90% lower first-year cost, while expanding adoption across both government and commercial environments. - learn more

- WndrCo participated in Tenkara’s $7M round, which was led by True Ventures as the company builds AI-powered operations agents for American manufacturers. Tenkara said it is creating tooling to help factories handle sourcing and operational work more efficiently at a time of rising supply-chain pressure, with backing from a broader investor group that also included Articulate Capital, Night Capital, HF0, SF1, and Transpose Platform. - learn more

- Aurora Capital participated in Niv-AI’s $12M seed round, backing the startup alongside Glilot Capital, Grove Ventures, Arc VC, Encoded VC, and Leap Forward as it emerged from stealth. Niv-AI is building sensors and software to measure millisecond-scale GPU power surges and help data centers use electricity more efficiently, with plans to deploy its system in a handful of U.S. facilities within the next six to eight months. - learn more

- Clocktower Technology Ventures participated in Fuse’s $25M Series A, which TechCrunch reported was led by Footwork, Primary Venture Partners, NextView Ventures, and Commerce Ventures, with Fuse also naming Clocktower Ventures among its backers. The company said it plans to use the funding to expand its AI-native loan origination and account opening platform for credit unions, building on traction with more than 100 customers and a $5M “rescue fund” aimed at helping institutions switch off legacy systems. - learn more

- Kairos Ventures participated in Alomana’s €4M seed round, which was led by CDP Venture Capital and also included Founders Factory, Italian Angels for Growth, Club degli Investitori, and others. Alomana said it will use the funding to strengthen its enterprise AI platform, add more capabilities for autonomous workflow automation, and support larger deployments across Europe as demand grows in sectors like finance, manufacturing, and pharma. - learn more

LA Exits

- Optimal’s Entertainment Media division is being acquired by Capstone Point Holdings, with the business set to operate under its legacy name, Optimad Media, following the deal. The transaction keeps founder Kevin Weisberg in place to lead the company from Los Angeles, while giving Optimad more backing to expand its entertainment media planning, buying, and prints-and-advertising investment capabilities across theatrical, streaming, and broadcast campaigns. - learn more