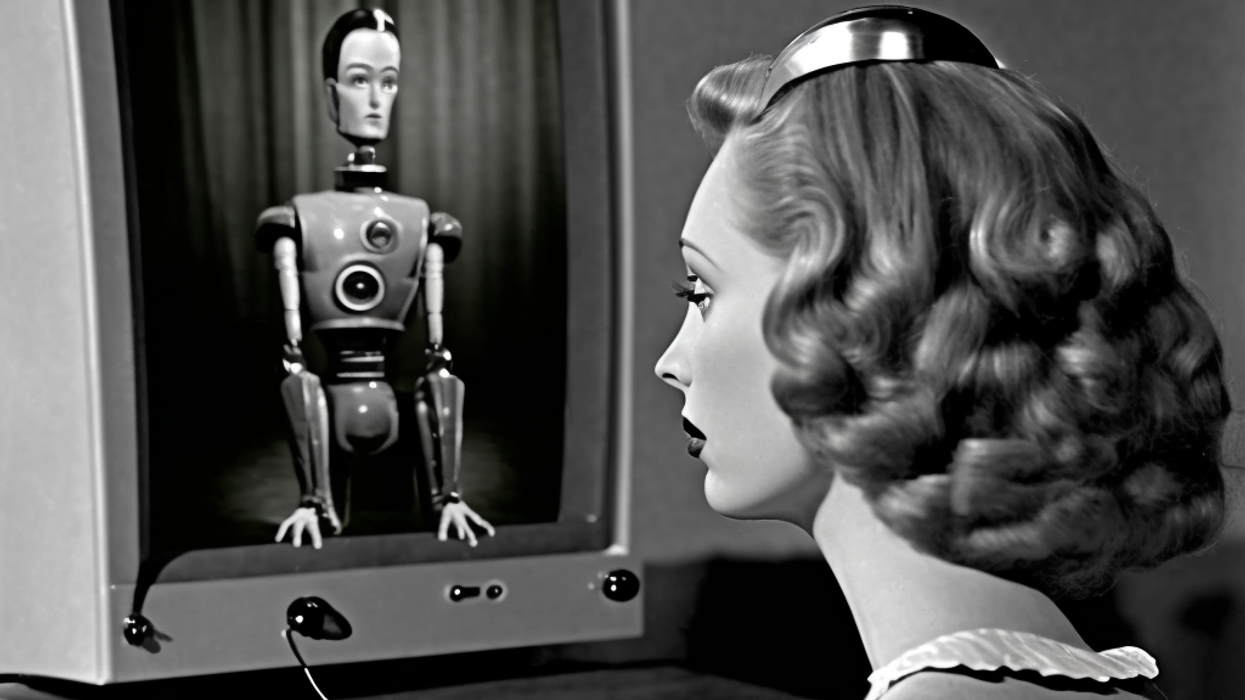

AI Chatbots Aren’t 'Alive.' Why Is the Media Trying to Convince Us Otherwise?

This is the web version of dot.LA’s daily newsletter. Sign up to get the latest news on Southern California’s tech, startup and venture capital scene.

Last week, former Google and Alphabet executive chairman Eric Schmidt, MIT computer science professor Daniel Huttenlocher, and retired political advisor and diplomat Henry Kissinger (yup, that one) co-authored an editorial for the Wall Street Journal. The headline announced that “ChatGPT heralds an intellectual revolution,” which the authors compare to the historical Age of Enlightenment.

If it’s been a bit since you brushed up on your world history, the Enlightenment was the intellectual and philosophical movement that took place across 17th- and 18th-century Europe, which included major advancements and innovations like René Descartes’ rationalist philosophy, Newtonian physics and mathematics, Adam Smith’s “The Wealth of Nations,” and pretty much all of the political and social ideas that informed both the French and American Revolutions. In other words, comparing anything to the Age of Enlightenment is a big claim. The authors further compare generative AI applications, specifically OpenAI’s ChatGPT, with historical developments on the level of Gutenberg’s printing press, and argue that its significance “transcends commercial implications or even noncommercial scientific breakthroughs.”

Rather than basing the bulk of their argument on data, or any kind of specific observations about the way ChatGPT currently operates, the authors make a more philosophical, even metaphysical, case. They write that, because software like ChatGPT works by drawing connections and patterns between a vast number of sources and primary texts, “the precise sources and reasons for any one representation’s particular features remain unknown.”

For Schmidt, Huttenlocher, and Kissinger, the mystery is the key. The fact that even the humans who built ChatGPT can’t ever fully understand exactly why the program issued its specific responses, as opposed to variations based on different source materials, represents, the authors argue, an entirely new kind of thinking and cognition. “AI,” they claim, “when coupled with human reason, stands to be a more powerful means of discovery than human reason alone.”

This may ultimately prove accurate, of course, but the authors are largely taking it on faith. They offer fanciful future predictions about generative AI like this one: “It will alter many fields of human endeavor, for example education and biology. Different models will vary in their strengths and weaknesses. Their capabilities—from writing jokes and drawing paintings to designing antibodies—will likely continue to surprise us.”

While it’s true that no humans are picking through the full predictive maps of all the patterns and sources that ChatGPT uses to respond to each query, it’s not exactly the unknowable mystery box that the authors imply. The app makes sense of human writing by reading thousands upon thousands of examples, then works out how to compose original sentences via guesswork, using math to “predict” which words should appear one after another in which order, with the explicit purpose of mimicking human speech. It doesn’t understand what it’s saying contextually at all, and implying that the software is capable of “writing jokes” comes right up to the edge of being actively misleading. Can you write a joke if you don’t understand what you’re saying, or why things are funny?
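To make the “predict the next word” idea concrete, here is a deliberately tiny sketch. It is not how ChatGPT is actually built (that involves a very large neural network trained on far more text); it is a toy word-pair counter, and the function names and the three-sentence corpus are invented purely for illustration. What it shows is the basic principle: the program counts which word tends to follow which, then picks the likeliest continuation without knowing what any of the words mean.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; a real system reads vastly more text than this.
corpus = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the cat sat on the sofa ."
)

model = train_bigram_model(corpus)
print(predict_next(model, "cat"))    # "sat" follows "cat" twice, "chased" once
print(predict_next(model, "zebra"))  # never seen in the corpus, so None
```

The counter “knows” that “sat” usually follows “cat” only because it has seen that pairing most often, which is the sense in which the prediction is statistical rather than a matter of comprehension.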

Schmidt, Huttenlocher, and Kissinger aren’t alone in extolling the wonders of AI and looking ahead to a promising future for the technology. In fact, this seems to be the only story a lot of publications are eager to tell about AI. A quick Google search for the latest news about AI apps, particularly if you add the secondary keyword “predictions,” produces a bevy of hopefully optimistic results promising everything from improved digital assistants to fully functional driverless cars to 90% of Hollywood content being designed by bots.

Even when raising potential red flags around the technology, a lot of press reports start out with some basic assumptions: namely, that artificial intelligence is already here, that it’s going to very quickly get much, much more sophisticated, and that it will therefore prove a significant potential threat to jobs, cybersecurity, social equity, our sense of self as humans, and possibly even our very lives.

This, of course, echoes some of the statements made by OpenAI co-founder Elon Musk close to a decade ago. On CNBC’s “Closing Bell” in 2014, Musk said he keeps a cautious eye on AI development due to concern about “scary outcomes,” name-checking James Cameron’s “Terminator” franchise. (That’s the one about a future in which artificially intelligent defense system SkyNet declares war on humanity and sends robots through time to kill off our leaders.) In 2017, two years after starting OpenAI, Musk tweeted that the primary aspiration for those working with the technology is “to avoid AI becoming the other.”

As anyone who has seen one of those Boston Dynamics demos knows, that’s a scary thought. No one wants to fistfight a machine. Still, there’s another story about OpenAI and ChatGPT and these latest developments which isn’t getting nearly the same level of coverage as their peak decade-plus moonshot potential or even their inherent dangers when fully realized. Viral apps like ChatGPT, Microsoft’s new AI-assisted Bing search engine, and OpenAI’s DALL-E image generator may be fascinating and useful, but they don’t actually represent “artificial intelligence” as most readers have come to understand the concept.

For all that they're thrown around on the 2023 Internet, terms like “machine learning” and “artificial intelligence” haven’t been clearly defined and aren’t used in any kind of consistent way. When some app developers or computer scientists say “artificial intelligence,” what they really mean is any kind of mechanical or digital application that can perceive, synthesize, and infer information. Some technologies that meet this definition have been around long enough to become relatively mundane. Take optical character recognition, in which a computer can scan a handwritten letter and turn it into a text document. That meets the definition of AI but you’re not trying to have a conversation with your scanner.

But when a lot of laypeople use or read the term “artificial intelligence,” they’re thinking of something specific. Very likely, many of them are thinking of what’s known as “Artificial General Intelligence” or AGI, which is basically a digital mind that’s capable of the same kinds of understanding as a human (or, in some cases, an animal) brain. Many academics and researchers consider this the same thing as achieving true consciousness and self-awareness, though there are some nuances and distinctions that are too complicated to get into here.

When Elon Musk frets on TV about SkyNet, it’s actually AGI that he’s worried about. Ideas about artificial general intelligence also tend to get caught up in notions about The Singularity, a hypothetical point in the future when technology becomes capable of self-innovation and humans entirely lose control over our creations. This may all very well be coming down the road one day. But we’re not there yet. A 2022 survey of experts in the field of technology and AI research found that around 50% believe high-level machine intelligence will occur by 2059, long after future humans will have become bored with trying to get Bing search to tell them a joke or call them a racial slur.

But for the most part, the technology press seems more interested in cheerleading, exchanging wide-eyed predictions about our automated future for cheap clicks. Rather than helping readers to understand where AI development is now and where it could one day go, breathlessly excited headlines extol the wonders on our doorstep and invite misleading comparisons between sophisticated prediction algorithms and sentient computers.

Chatbots have certainly come a long way since their debut in the 1960s and ’70s, and it’s easy to conceive of scenarios in which they can be expressly helpful. But they are not alive and they do not have feelings, and it’s basically impossible for this development to even occur in their current form. Giving them names like “Sydney” and posing queries that encourage them to go off-script and have seemingly emotional reactions to prompts can make them feel “alive,” but it’s only an illusion. ChatGPT is far closer to Clippy 3.0 than Samantha, the sexy operating system from “Her.”

It’s understandable, of course, why founders and entrepreneurs would be interested in selling the public on the grandest possible vision of AI and what it can do. When OpenAI CEO Sam Altman tweets about “the amount of intelligence in the universe” doubling every 18 months and his personal plans for AGI, he’s got a company to promote and investors to please. It’s traditionally been the role of journalists and the media to step in and clarify where we are and how close we’ve actually come to achieving those goals, so the entire world doesn’t get completely carried away by the hype.


Image Source: Apex

Image Source: Apex

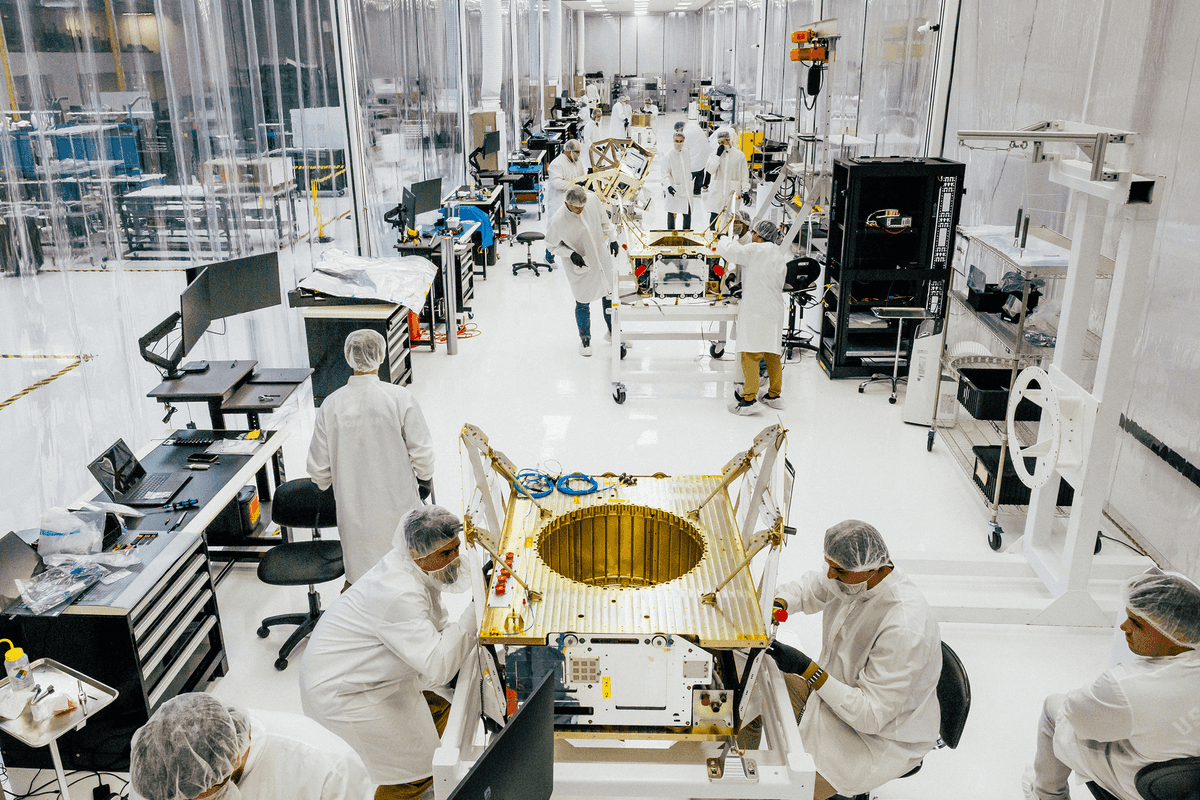

Image Source: Observable Space

Image Source: Observable Space