NASA and SpaceX Set to Make History With Landmark Spaceflight During Pandemic

GeekWire contributing editor Alan Boyle is an award-winning science writer and veteran space reporter. Formerly of NBCNews.com, he is the author of "The Case for Pluto: How a Little Planet Made a Big Difference." Follow him via CosmicLog.com, on Twitter @b0yle, and on Facebook and MeWe.

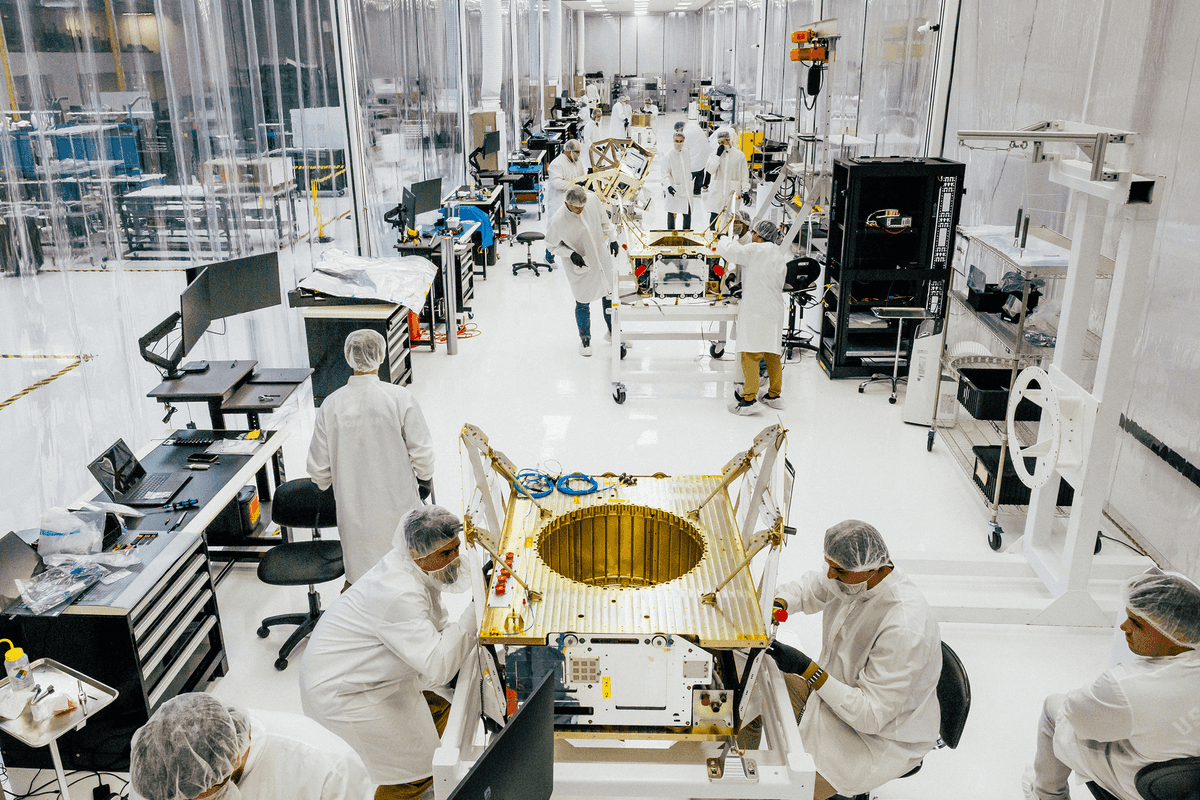

Everything is in readiness for the first mission to send humans into orbit from U.S. soil since NASA retired the space shuttle fleet in 2011 – from the SpaceX Crew Dragon capsule that will take two astronauts to the International Space Station, to the parachutes that will bring them back down gently to an Atlantic Ocean splashdown, to the masks that NASA's ground team will wear in Mission Control.

The fact that the launch is coming in the midst of the coronavirus pandemic has added a weird and somewhat wistful twist to the history-making event.

"That certainly is disappointing," NASA astronaut Doug Hurley, who'll be spacecraft commander for the Crew Dragon demonstration mission, told reporters today during a mission preview. "An aspect of this pandemic is the fact that we won't have the luxury of our family and friends being there at Kennedy to watch the launch. But it's obviously the right thing to do."

NASA is asking people not to show up in person to watch the liftoff, currently scheduled for 4:32 p.m. ET (1:32 p.m. PT) May 27 at Kennedy Space Center in Florida.

"The challenge that we're up against right now is, we want to keep everybody safe," NASA Administrator Jim Bridenstine said. "That's the No. 1 highest priority of NASA, keeping people safe, and so we're asking people not to travel to the Kennedy Space Center. And I will tell you, that makes me sad to even say it. Boy, I wish we could make this into something really spectacular."

Instead, NASA is asking the public to tune in to streaming coverage of the journey to the space station, which will run continuously from before launch to the docking 19 hours after liftoff.

This month's milestone mission is aimed at testing all the systems on the SpaceX Crew Dragon during crewed flight for the first time. It's known as Demo-2, because the flight follows up on Demo-1, an initial uncrewed demonstration mission that was flown successfully in March 2019.

Hurley and his Dragon crewmate, Bob Behnken, will work alongside the other residents of the space station for at least a month – and perhaps for as long as four months, depending on how smoothly the mission goes and how quickly a follow-up Crew Dragon mission comes together.

The ultimate limiting factor has to do with how long the Dragon's power-generating solar arrays last before they degrade in the harsh conditions of space. Engineers figure 119 days is the maximum.

If the flight is a success, Crew Dragon spacecraft will be flying regular missions to and from the space station for years to come, marking the end of an era when NASA had to rely exclusively on the Russians to put its astronauts in orbit, at a cost that has ranged beyond $80 million a seat.

Demo-2 is as much of a milestone for SpaceX as it is for NASA. It'll be the first crewed flight for the 18-year-old space company founded by billionaire Elon Musk.

"We were founded in 2002 to fly people to low Earth orbit, the moon and Mars, and NASA has certainly made that possible," said Gwynne Shotwell, SpaceX's president and chief operating officer.

After the decades-old space shuttles were retired, NASA selected SpaceX and Boeing to build relatively low-cost space taxis to ferry astronauts to and from the space station. SpaceX went with an upgraded version of its Dragon capsule, which has been making orbital cargo runs for NASA since 2012. Boeing developed a brand-new spacecraft, the CST-100 Starliner, which is being fine-tuned in the wake of last year's flawed test flight.

Just today, SpaceX checked off one of the last critical items on its preflight checklist: the 27th and final test of the Crew Dragon's Mark 3 parachute system.

During the next few weeks, NASA and SpaceX will continue scrutinizing the Crew Dragon and its Falcon 9 rocket. Kathy Lueders, program manager for NASA's Commercial Crew Program, said all of the technical issues are being resolved – including checking to make sure the Falcon 9's engines won't fall prey to the kind of failure that cropped up during a launch last month.

Concerns about COVID-19 are adding a new dimension to the safety measures traditionally required for spaceflight.

"We've been going through a number of precautions with Bob and Doug as the coronavirus pandemic has been in place for a few months," said Steve Stich, the Commercial Crew Program's deputy manager. "We have minimized contact with them for weeks now. … They only come to certain training events where they really need to be present."

Stich said anyone who comes into close proximity to Behnken and Hurley during training has to go through medical checks, and wear a mask and gloves. Some mission simulations that are typically conducted in person are being done over high-speed data connections instead.

"I would really say that our quarantine period, instead of being two weeks, has really stretched into closer to 10 weeks," Behnken told GeekWire during a post-briefing telephone interview.

"We're taking temperatures, we're wearing masks in public areas, we are social distancing as well," SpaceX's Shotwell said. "We've got at least half our engineering staff working from home. Actually, more than that. And for those that can't work from home, we've got protective gear for them to be able to get their jobs done."

NASA's Stich said the layout of Mission Control has been changed to ensure at least 6 feet of distance between ground controllers. "We go in and clean those rooms ahead of time with sanitizer. … And then, in between shifts, we make sure we clean things for the next group of flight controllers and operators," Stich said.

Behnken said it's been challenging to manage the complications associated with the pandemic while preparing for the space mission.

"Of course, people have had to change their lifestyles," he said. "We're conducting schooling from home for my son as we continue out through the school year. So, really trying to avoid the pandemic becoming a distraction – at the same time that we take all the appropriate precautions that science and prudence would dictate – has just been something we've had to incorporate."

This story first appeared in GeekWire.
