Can the Grid Survive the Coming Onslaught of Electric Vehicles?

David Shultz reports on clean technology and electric vehicles, among other industries, for dot.LA. His writing has appeared in The Atlantic, Outside, Nautilus and many other publications.

Early last month, during a historic heat wave, Southern California teetered on the brink of grid collapse, and the threat of blackouts loomed for several days. A variety of factors helped avert the crisis, but pleas from grid operators and Governor Newsom for Californians to conserve energy were integral to the effort: officials gave residents a laundry list of ways to save power, including turning off air conditioning and unplugging unused appliances. The suggestion to avoid charging electric vehicles, however, instantly drew an outsized amount of political attention, not least because the heat wave came just days after the California Air Resources Board announced its intent to phase out fossil fuel car sales entirely by 2035. Naturally, critics of electric vehicles seized on the incident to paint the transition as a wasteful pipe dream.

The question of whether the grid can survive an EV takeover, however, is a valid one. You don’t need me to tell you that the grid is already stressed and that new EVs will add to its load. Fortunately, some researchers are studying exactly this question. Last week, Stanford researcher Siobhan Powell and her colleagues published a study in Nature Energy that may be the most comprehensive effort to date to model how the transition to EVs might unfold and whether we’ll be able to produce, store and distribute enough electrons to keep up.

The results are encouraging, painting full electrification as a realistic and achievable target. The authors calculate that meeting President Biden’s goal of 50% EV adoption in the U.S. by 2035 would increase total electricity consumption by only about 5% compared with a scenario with no new EVs. Put another way, each percentage-point increase in EV adoption raises total consumption by about 0.11% in the modeled system. “It’s not like it’s doubling the electricity we deliver now,” says Powell. “It’s relatively small, which is part of why it’s feasible.”
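The two figures are roughly consistent with each other: 50 percentage points of adoption at about 0.11% each works out to about 5.5%, in line with the "about 5%" headline number. The sketch below makes that back-of-the-envelope check explicit. It assumes, purely for illustration, that the relationship is linear across the whole adoption range; the function name and constant are hypothetical and are not taken from the study's model.

```python
# Hypothetical back-of-the-envelope estimate. ASSUMPTION: each percentage
# point of EV adoption adds a fixed ~0.11% to total electricity consumption,
# as reported for the modeled system. The real relationship need not be linear.
PERCENT_INCREASE_PER_ADOPTION_POINT = 0.11


def consumption_increase(adoption_percent: float) -> float:
    """Return the estimated % increase in total electricity consumption."""
    if not 0 <= adoption_percent <= 100:
        raise ValueError("adoption_percent must be between 0 and 100")
    return adoption_percent * PERCENT_INCREASE_PER_ADOPTION_POINT


if __name__ == "__main__":
    # 50% adoption, the scenario discussed above
    print(f"{consumption_increase(50):.1f}%")
```

Because 0.11% is an approximate average, treat the output as a sanity check on the reported numbers rather than as a forecast.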

Nonetheless, according to the modeling, some electrification strategies are better than others. In particular, Powell and her team report that shifting EV charging to the daytime would require less investment in storage and generation and would dramatically reduce the carbon footprint of the transition.

This is the opposite of what’s currently happening. Most early adopters charge their cars at home overnight. For now, that makes sense: electricity is cheap during off-peak hours, and charging overnight lets drivers wake up to a full battery. And because EVs remain expensive, most early adopters are relatively wealthy and live in single-family homes where installing a charger is feasible.

The issue with charging at night, however, is that solar and wind energy production are at their lowest during those hours. The result is that a higher fraction of our electricity comes from coal and natural gas. As EV adoption ramps up, overnight electricity will get more expensive and dirtier.

Conversely, energy is most abundant, cheapest, and cleanest from late morning to early afternoon. Shifting charging to those hours therefore makes it cheaper, cleaner, and less stressful for the grid, though the shift will require careful planning and incentives. Powell says one of the study’s main takeaways is the sheer number of new charging stations that will be needed as the state and the country transition to EVs. “It’s millions more charging stations, so on its own, that’s a big challenge,” she says. “But in particular, making decisions to support, subsidize, help build out more daytime charging options, I think is really important.”

Now, then, is the time to start planning an infrastructure rollout that reduces the impact of these issues. According to the team’s models, the United States will need millions of new charging stations before 2035 to accommodate the shift to EVs. Placing those stations strategically (at businesses, retail hubs, restaurants, and office parks) to encourage daytime charging could dramatically reduce the cost of electricity for drivers, not to mention the strain on the grid and the amount of fossil fuels we need for transportation.

“Traditionally, transportation and the grid have been two separate sectors, and now they’re being coupled,” says Powell. “So we have to think about how policies in one space, like building charging stations, impacts the other.”

- The Impact of an EV Takeover On California's Power Grid - dot.LA ›

- California Dodged Rolling Blackouts With Help From a Text - dot.LA ›

- Here's What EVs Are Doing to California's Energy Grid - dot.LA ›

- C02 Emissions Saved by Using EVs For Holiday Travel - dot.LA ›

- C02 Emissions Saved by Using EVs For Holiday Travel - dot.LA ›

- Tesla’s Semi Truck Could End Natural Gas Trucks - dot.LA ›

David Shultz reports on clean technology and electric vehicles, among other industries, for dot.LA. His writing has appeared in The Atlantic, Outside, Nautilus and many other publications.

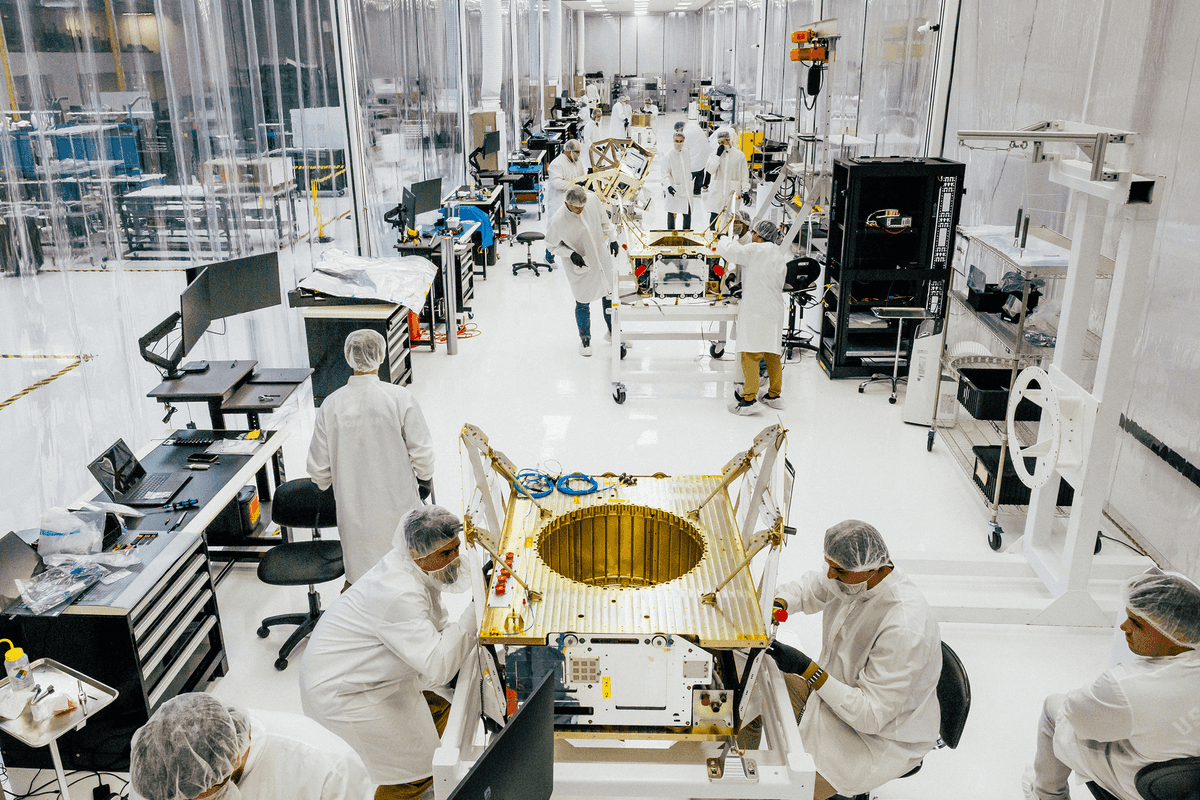

Image Source: Apex

Image Source: Apex

Image Source: Observable Space

Image Source: Observable Space