Dark Money Influencers Are Placing Political Ads on TikTok, Mozilla Says

Favot is an award-winning journalist and adjunct instructor at USC's Annenberg School for Communication and Journalism. She previously was an investigative and data reporter at national education news site The 74 and local news site LA School Report. She's also worked at the Los Angeles Daily News. She was a Livingston Award finalist in 2011 and holds a Master's degree in journalism from Boston University and BA from the University of Windsor in Ontario, Canada.

When TikTok proudly announced in 2019 that it would ban political advertisements on its platform, the social networking service was met with widespread praise. At a time when Facebook, Twitter, and Instagram refused to take a stand against political powers, many applauded TikTok's commitment to neutrality.

Yet two years later, it's clear TikTok has succumbed to those forces as well: A report published Thursday by Mozilla suggests that misleading political ads and "dark money" have seeped onto the app.

A team of Mozilla researchers found that TikTok influencers in the U.S. are "being paid by political organizations to post content espousing their views," and that not all of these posts are disclosed as paid partnerships.

The report, titled "Th€se Are Not Po£itical Ad$: How Partisan Influencers Are Evading TikTok's Weak Political Ad Policies," charges TikTok with lax oversight and policies that contain loopholes that have enabled these posts to appear.

"TikTok does not effectively monitor and enforce its rule that creators must disclose paid partnerships," the researchers wrote, "nor does the platform proactively label sponsored posts as advertisements."

In response to the Mozilla report, TikTok released a statement on Thursday saying, "Political advertising is not allowed on TikTok, and we continue to invest in people and technology to consistently enforce this policy and build tools for creators on our platform. As we evolve our approach, we appreciate feedback from experts, including researchers at the Mozilla Foundation, and we look forward to a continuing dialogue as we work to develop equitable policies and tools that promote transparency, accountability and creativity."

The use of political ads on social media drew attention following the 2016 presidential election when it was revealed that Russia weaponized social platforms by spreading misinformation in an attempt to influence the election. Facebook banned political ads after the 2020 election "to avoid confusion or abuse following Election Day," but lifted the ban in March.

TikTok, however, said in 2019 that political advertising did not fit the app's "light-hearted and irreverent" experience. The Mozilla report suggests that TikTok has not only failed to keep this promise but has also eschewed the transparency mechanisms that other platforms adopted as attention to the dangers of political ads grew.

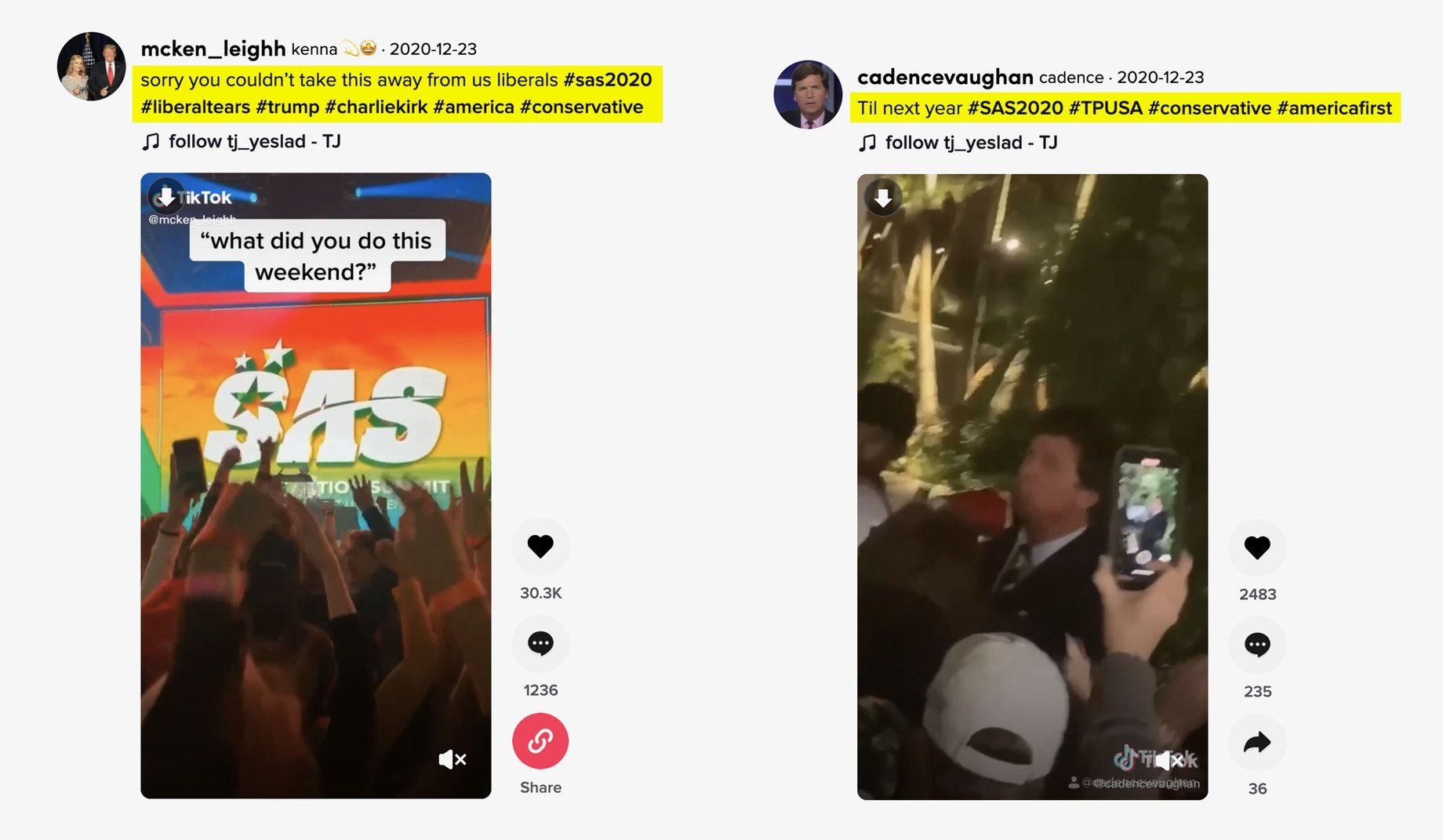

Mozilla found that several right-wing TikTok influencers appear to be funded by Turning Point USA, a tax-exempt nonprofit which has a dedicated influencer program intended to fund conservative content creators on social media.

The report points to one post by a TikTok user with 67,000 followers that appears to have been recorded at a Turning Point USA conference. The post features the hashtags #tpusa and #trump2020, and includes images of people wearing red Make America Great Again hats and holding Trump flags. It does not include a hashtag indicating that it is sponsored content.

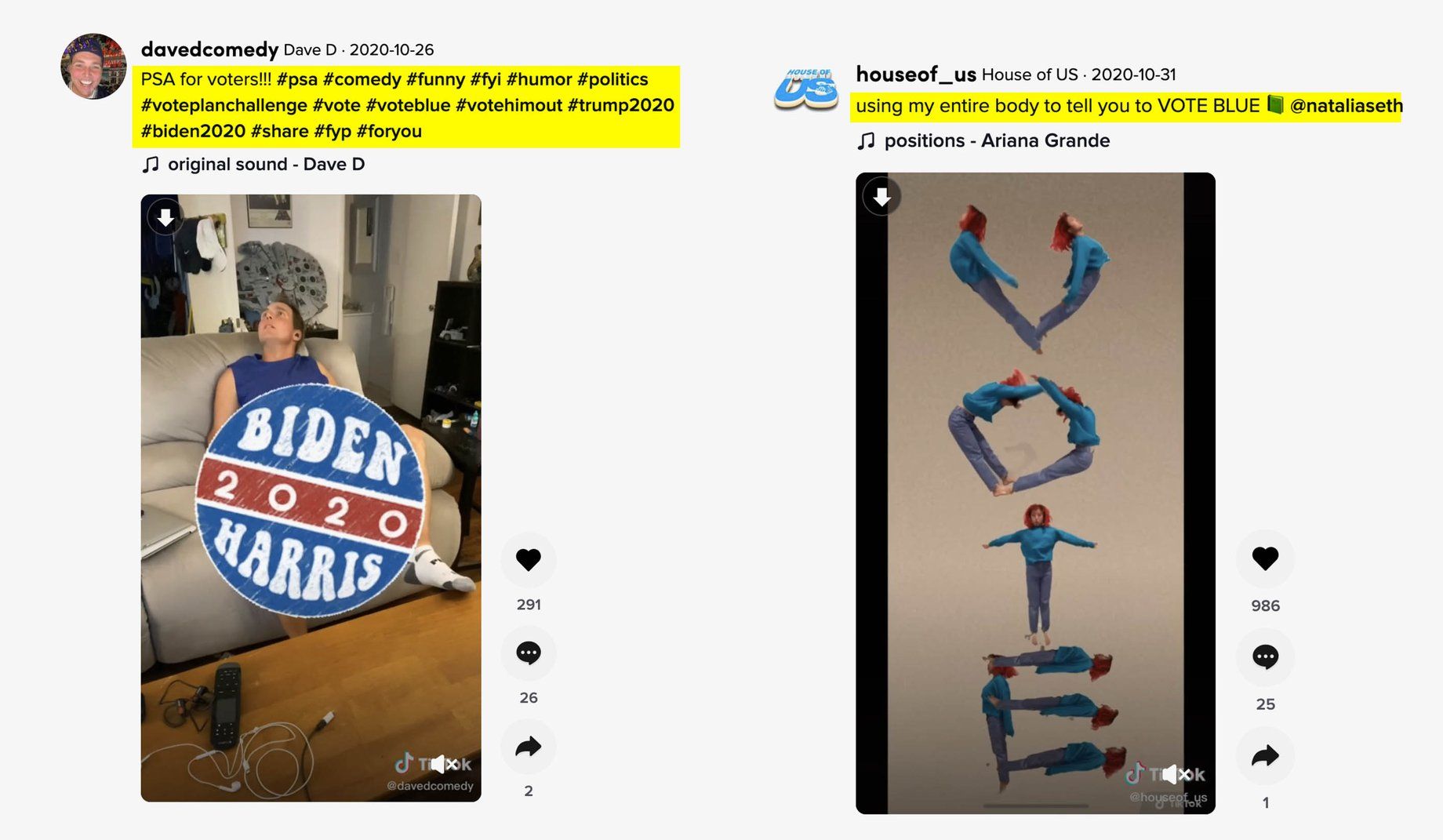

On the other end of the political spectrum, Mozilla pointed to a report by Reuters that found influencers were paid by a progressive PAC, The 99 Problems, to create pro-Biden TikTok posts without disclaimers.

According to the Mozilla researchers, TikTok doesn't enforce its rule that creators must disclose paid partnerships, and it doesn't "proactively" label sponsored posts. The platform, they wrote, "doesn't seem to monitor influencer advertising."

Mozilla queried the TikTok Application Programming Interface to see the metadata associated with each TikTok post. Posts with advertiser-funded hashtag challenges were marked as advertising in the metadata, whereas influencer posts that used the hashtag #ad or #sponsored were not.
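The kind of comparison described above can be illustrated with a minimal sketch. It assumes post records have already been retrieved and uses hypothetical field names (`desc` for the caption, `is_ad` for the metadata flag); these are stand-ins for illustration, not TikTok's actual API schema or Mozilla's actual code.

```python
import re

# Hashtags an influencer might use to self-disclose a paid post.
DISCLOSURE_TAGS = {"ad", "sponsored"}


def hashtags(caption):
    """Return the set of lowercase hashtags found in a caption."""
    return {tag.lower() for tag in re.findall(r"#(\w+)", caption)}


def self_disclosed_but_unflagged(posts):
    """Return posts whose caption carries a disclosure hashtag
    but whose metadata does not mark them as advertising.

    The "desc" and "is_ad" keys are hypothetical field names.
    """
    return [
        post
        for post in posts
        if hashtags(post["desc"]) & DISCLOSURE_TAGS
        and not post.get("is_ad", False)
    ]


# Made-up sample records, for illustration only.
sample_posts = [
    {"id": "1", "desc": "Join the #challenge", "is_ad": True},
    {"id": "2", "desc": "Love this product #ad", "is_ad": False},
    {"id": "3", "desc": "Just dancing #fyp", "is_ad": False},
]

flagged = self_disclosed_but_unflagged(sample_posts)
print([post["id"] for post in flagged])
```

In this made-up sample, only the second post is returned: its caption says #ad, yet its metadata flag is unset, which mirrors the gap the researchers reported.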

As on other social media services, the Federal Trade Commission requires influencers on TikTok to disclose advertising, such as by using the hashtag #ad. Mozilla says other platforms are better at monitoring these advertisements because they offer influencers "straightforward" ways to disclose their partnerships, such as a checkbox on YouTube that marks a post as a sponsored video. Instagram also has a tool creators can use to mark their content as branded. (TikTok also differs from other social media platforms in that it doesn't have a publicly searchable database of advertising data.)

"Of course, it's hard to know exactly how self-disclosure ad policies are being enforced across platforms but TikTok is significantly far behind Instagram and YouTube when it comes to providing tools and enacting clear, strict, and transparent policies," the Mozilla researchers wrote in the report.

The Mozilla researchers recommended that TikTok address the issues they uncovered by developing mechanisms for creators to disclose paid partnerships, introducing an ad database that includes paid partnerships, and updating its policies and enforcement processes on political advertisements to ensure they cover all the ways that paid political influence occurs on the platform.
