Will AI-Integrated Wellness Replace the 'Life Coach?'

This is the web version of dot.LA’s daily newsletter. Sign up to get the latest news on Southern California’s tech, startup and venture capital scene.

According to Bloomberg, Apple is developing an AI-powered health coaching service, along with new technology for tracking a user’s emotions. Apparently both of these systems will be integrated into the Apple Watch in the near future, enabling the company to monitor data on a user’s well-being in real time, feed that data into an artificially intelligent app, and then make practical suggestions, as a life coach or a counselor might.

At first, the app will invite users to log how they’re feeling and answer a few quick questions about their day; they can then track how these reports shift and change over time. But eventually, the hope is that the app will automatically detect how a user is feeling based on their voice and context clues, along with additional biometric data.

The Bloomberg article is tantalizingly light on specifics, but does mention that the project – codenamed “Quartz” – could theoretically help motivate Apple Watch wearers to “exercise, improve eating habits and… sleep better.” That at least gives an early indication of the kinds of coaching the app aims to provide.

Basic tips and advice based on regularly updated user data are of course a logical extension of AI technology. Responding to prompts with simple bursts of basic information is what these apps do best. In some ways, it’s more appropriate to have an AI app keeping an eye on someone’s mood or glucose levels than writing poetry or movie scripts for them. It does, however, raise some concerns about both potentially negative outcomes and overreach.

Apple, however, is certainly not the only company considering ways to use AI to improve health and wellness more generally. There are already a vast number of standalone wellness apps in stores today with some form of AI integration or another. The already-launched Youper app sounds very much like an early version of Apple’s Quartz concept; the app chats with users to determine how they’re feeling, and then provides customized suggestions to help them improve their mood. The Calm app remains one of the biggest and most visible players in the wellness space, though the San Francisco “unicorn” laid off around 20% of its staff last year.

And that’s just the tip of the iceberg:

- The AI-powered Chrome extension Breathhh monitors your online interactions and selects specific moments to interrupt you for some stress-relieving breathing exercises.

- MindDoc functions more as a practical life coach, helping users to design and follow through with plans for dealing with anxiety, insomnia, eating disorders, and other common problems.

- The Replika AI chatbot – already infamous on social media for its weird viral ads – allows users to create a digital avatar who “gets to know you” over time, then provides personal support and advice.

- Ladder is an AI-powered wellness app designed to help people better understand the connections between their actions and emotions; it was created specifically for and by people of color.

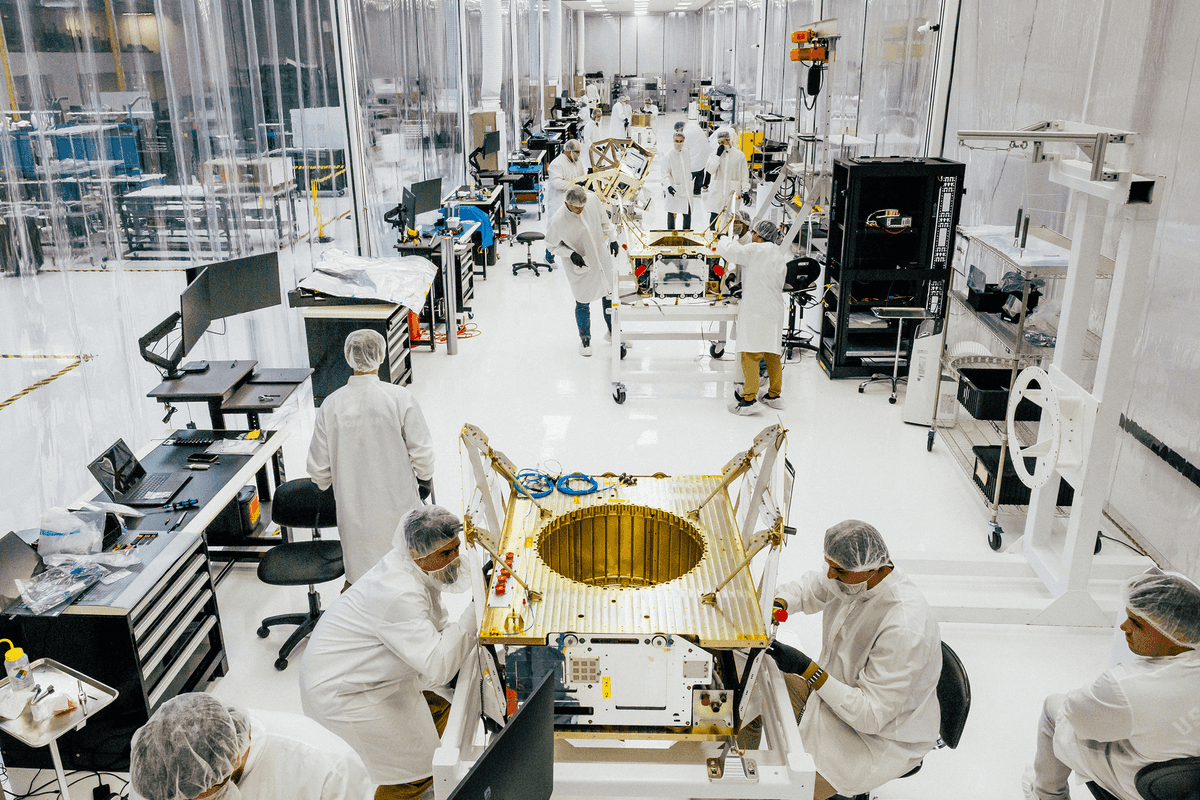

For its part, Los Angeles has a number of contributors to the AI wellness space, but biotech-friendly Southern California companies have tended toward more practical, behind-the-scenes approaches to integrating AI and health care, rather than the consumer-facing apps so popular in Silicon Valley, Austin, and New York.

- Entos of La Jolla, for example, uses AI for drug discovery, doing quantum molecular simulation to create novel pharmaceuticals.

- Kyan Therapeutics is utilizing similar techniques specifically to develop new cancer treatments.

- Meanwhile, NovaSignal uses artificially intelligent cerebral ultrasounds to non-invasively track blood flow to a patient’s brain.

That’s not to say there are no consumer-facing companies doing interesting things in LA with AI and wellness, too. Which brings us to Kabata Fitness, makers of the world’s smartest dumbbells. The company raised a $2 million round in 2022 with some notable investors, including Cleveland Cavaliers owner Dan Gilbert, Golden State Warriors consultant and former player Zaza Pachulia, AngelList founder Naval Ravikant, and Bonobos co-founder Andy Dunn.

“Smart dumbbells” sounds like a bit, but considering all the complexities and nuances around strength training, a set of weights that will instruct you in how to lift them has some innate appeal over a monthly gym membership. There’s also relatively little concern that your dumbbells will invade your privacy or give you ill-considered advice. (Maybe other than “try to lift me.”) Perhaps “personal trainer” is another one of those jobs on the verge of being eliminated by clever machines.
